From 2023 to 2026 I worked as a Staff Systems Engineer on Google's Trusted Partner Cloud project, which shipped in France in December 2025 as the Google Cloud Dedicated (GCD) product, operated by S3NS. My role was site reliability for a sovereign cloud, which is a slightly odd thing to do, because a sovereign cloud has two sets of customers and you have to sit between them.

On one side: the Google software engineers who built and operate Google Cloud and its infrastructure. They are fluent in their systems, comfortable at hyperscale and used to a model where SRE and software engineering cooperate to run things.

On the other side: a partner organisation that was going to run a sovereign instance of that cloud independently, in country, without depending on Google for most things. The partner had to build the operational knowledge, staffing model and institutional muscle needed to run that environment independently; helping make that possible was the project.

It helped that I'd joined this project at Google as a new hire. I knew nothing about how Google Cloud was implemented when I started; none of the services, acronyms or day-to-day rhythms. The partner would be on the same learning curve a little later and I made a point of staying near them on it. Going too deep on any one service would have cost me the outsider's perspective I needed to keep.

Most of what I did was bridging: operational processes, incident discussion, tool readiness, training material and walking in the partner's footsteps before the handoff. Another way to say it is that we were helping define the operational surface a sovereign operator would use. Bridging isn't glamorous, but it's where sovereign cloud operations actually live.

The interesting thing about the work — and the reason I've been thinking about it three months after leaving Google — is that it quickly reveals that "sovereignty" is a political and regulatory ask that breaks down, operationally, into three hard engineering questions. I didn't design the answers. I worked on the practical operational side and watched the questions surface.

The regulatory ask

The political and regulatory language for sovereignty tends to focus on data residency, control and independence from foreign jurisdictions. Those are real concerns and they have real answers for physical location, key management, contractual structure and qualification frameworks like France's SecNumCloud. The trusted cloud announcement S3NS made in December 2025 has all of that.

There's a second-order question hiding underneath, though. The operational model is public: S3NS personnel run day-to-day operations, with only limited and supervised assistance from Google, and Google Cloud Dedicated is designed so the partner can continue running for up to twelve months if the connection to Google is severed (per Google's sovereignty post). If a sovereign cloud is to be operated independently of the technology provider at that depth, someone — a different someone — has to do the operating. And they have to do it well enough to keep the customers happy and the regulators satisfied. That's no longer a contractual problem, it's an SRE problem.

One of the lead SREs described the work early on as creating an operational surface inside Google Cloud that had not needed to exist in that form before.

That stuck with me. Large systems have plenty of internal tools, APIs, dashboards, playbooks and escalation paths, but they are usually shaped around the organisation that already operates them. Sovereign operation puts a new boundary in the middle. A different operator needs a surface they can use: what to look at, what to touch, what to escalate, what not to touch, and how to know the difference.

That is part of why this is an SRE problem. It is not only a matter of handing over documentation. It is the retroactive creation of an operational interface for a system whose original operators were inside the same company as the people who built it.

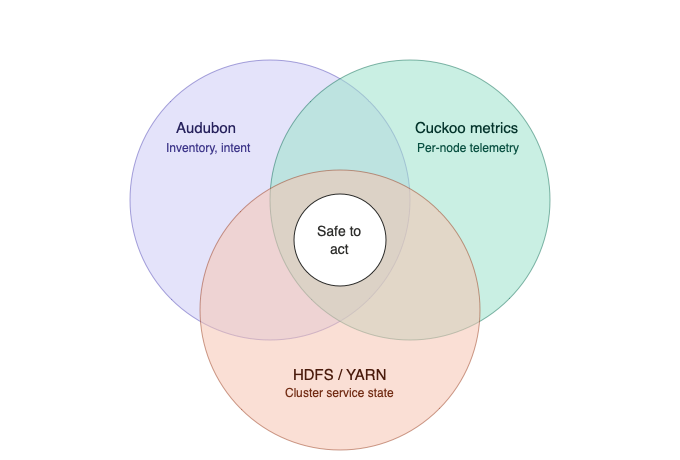

The SRE problem breaks down into three questions that all happen to be hard at once.

The three questions

1. The SLA question. Can you write a defensible SLA for a sovereign instance of a hyperscaler cloud? In this case the service is regional: S3NS's Cloud de Confiance runs as a single region (u-france-east1) with three zones, and features that require multiple regions are not part of the offering. The engineering question is whether the availability promise, failure domains, support model and operator capacity all line up.

2. The staffing question. Can you operate it with a reasonable number of people? The actual scope is large: many cloud services, a stack too big for any one person to hold in their head. Hyperscaler operations evolved with service developers nearby, and operational load reflects that proximity. A partner-operated sovereign team inherits much of that operational shape, but not the same day-to-day proximity to the service developers.

3. The complexity question. Can the operators manage the complexity at all? Google Cloud is large: many services, many teams and many conventions. A sovereign team starting from outside does not inherit that specific institutional context. The complexity doesn't shrink just because the team did.

These three questions form a loop:

- Complexity → Staffing: expertise doesn't scale by hiring fast.

- Staffing → SLA: you can't defend a target you don't have the people to defend.

- SLA → Complexity: you have to mitigate across the full surface area to keep the SLA honest.

Pull on one, the other two move.
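The Staffing → SLA link can be made concrete with error-budget arithmetic. The sketch below is illustrative only: the function names, the 30-day window and the example targets are my assumptions, not figures from S3NS or Google. It converts an availability target into minutes of allowed downtime and then asks how many full outages fit inside that budget at a given time to mitigate.

```python
def downtime_budget_minutes(target: float, window_days: int = 30) -> float:
    """Minutes of full outage that an availability target allows in the window.

    `target` is a fraction such as 0.999 (a hypothetical target for
    illustration, not a published SLA).
    """
    if not 0.0 < target < 1.0:
        raise ValueError("target must be a fraction strictly between 0 and 1")
    return (1.0 - target) * window_days * 24 * 60


def incidents_within_budget(
    target: float, minutes_to_mitigate: float, window_days: int = 30
) -> int:
    """Whole number of full-outage incidents that fit inside the budget.

    `minutes_to_mitigate` stands in for how fast the on-call team can
    actually respond, which is where staffing enters the arithmetic.
    """
    if minutes_to_mitigate <= 0:
        raise ValueError("minutes_to_mitigate must be positive")
    budget = downtime_budget_minutes(target, window_days)
    return int(budget // minutes_to_mitigate)


if __name__ == "__main__":
    for mitigate in (20, 45):
        print(mitigate, incidents_within_budget(0.999, mitigate))
```

Under these assumed numbers, a 99.9% target over 30 days leaves roughly 43 minutes. A team that mitigates in 20 minutes can absorb two full outages in the window; a team that needs 45 minutes cannot absorb one. That is the sense in which a target has to be defended by people.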

What bridging looked like

The practical operational work sat inside those three questions. It also sat inside that new operational surface: what the partner would see, use, trust and escalate through. It came in a few forms.

Processes and playbooks. The Google side operates by playbooks that assume certain context: that you know what the service is, that you have the tools, that you know which escalation path to take. Most of those assumptions don't hold for a partner just learning the service. Part of the work was reading playbooks with fresh eyes, finding the steps that didn't make sense without context and working with service teams to fix them. A playbook that was written for an expert service team likely doesn't work for a new operator.

Tools. I spent a substantial fraction of the project on tooling readiness, because the partner couldn't operate without the oncall tools. There wasn't a single source of truth for the oncall SRE tooling surface, so part of the work was building one. Mapping the tooling surface a partner-operated environment actually needs is months of work. It is the unglamorous floor of operational sovereignty. If everything else goes wrong, the tools still need to work on the console.

Training and explanation. Teaching a deep stack to a new team is hard work. Doing it across two organisations, mostly remotely, with up to nine timezones in play, is harder. Documentation alone doesn't carry the cross-cutting questions, and synchronous sessions only ever work for some of the people who should attend. Cross-organisation, cross-timezone technical education at scale is a problem in its own right.

Walking in the operators' footsteps, as early as possible. We set up an oncall rotation where we stood in the partner's eventual position, taking the same pages they would later take. Same alerts, same playbooks. The difference was that we could escalate to the developers, surface what was missing and feed it back before the partner took that scope. This is where all three questions — SLA, staffing, complexity — met operational reality. It was also a test of the operational interface: whether the things a future operator would see were enough to let them act. The earlier the rotation runs, the more it can surface ahead of the people who'll inherit the load. It's a transferable practice for any operational handoff at scale.
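One way to turn a shadow rotation into feedback is to record, for each page, whether it was resolved without escalating and, if not, why. The sketch below is hypothetical: the `Page` record, its field names and the reason strings are invented for illustration and do not describe any tooling we used.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass(frozen=True)
class Page:
    """One page taken during the shadow rotation (hypothetical record)."""
    alert: str
    # None means the page was resolved without escalating to developers.
    escalation_reason: Optional[str] = None


def self_sufficiency(pages: List[Page]) -> float:
    """Fraction of pages resolved without escalation."""
    if not pages:
        raise ValueError("no pages recorded")
    handled = sum(1 for p in pages if p.escalation_reason is None)
    return handled / len(pages)


def top_gaps(pages: List[Page], n: int = 3) -> List[Tuple[str, int]]:
    """Most frequent escalation reasons, i.e. what to fix before handoff."""
    reasons = Counter(
        p.escalation_reason for p in pages if p.escalation_reason is not None
    )
    return reasons.most_common(n)


if __name__ == "__main__":
    pages = [
        Page("disk-full"),
        Page("api-latency", "missing playbook step"),
        Page("quota-error", "missing playbook step"),
        Page("cert-expiry", "no tool access"),
    ]
    print(self_sufficiency(pages))
    print(top_gaps(pages, 1))
```

The ratio tracks how much of the load a future operator could carry alone, and the ranked reasons give the list of playbook, tooling and training gaps to feed back.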

Measuring the partnership

The partnership is a contract on paper. Day to day, it's also an operational relationship with seams where one side has to respond to the other. The same translation move that runs through this post applies here: contract terms, however precise, have to be translated into SLOs — and the queries and dashboards behind them — before they can be operated against.

That part is mostly mechanical. The harder part is that the measurement crosses an organisational boundary. Both sides need equal access to the same measurements, computed the same way, for two reasons: trust, because a partnership runs on shared numbers, not delegated ones; and equity, because the partner has to be able to own and defend the metrics.

Working out how to make the operational relationship between partners measurable is itself one of the SRE problems sovereignty creates. The measurement has to be transparent, bidirectional and based on shared definitions.
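A minimal sketch of what "shared definitions" could mean in practice, assuming a single SLO on cross-organisation response time. Everything here is hypothetical: the `SloDefinition` fields, the example name and the thresholds are mine, not terms from any real contract. The idea is that both sides run the same pure function over the same records and can compare a fingerprint of the definition to confirm they are computing the same thing.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Sequence


@dataclass(frozen=True)
class SloDefinition:
    """A contract term translated into something computable (hypothetical)."""
    name: str
    max_minutes: float  # a response is "good" at or under this many minutes
    target: float       # fraction of responses that must be good

    def fingerprint(self) -> str:
        """Stable hash both organisations can compare before trusting numbers."""
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def attainment(slo: SloDefinition, response_minutes: Sequence[float]) -> float:
    """Fraction of responses that met the threshold."""
    if not response_minutes:
        raise ValueError("no responses in the measurement window")
    good = sum(1 for m in response_minutes if m <= slo.max_minutes)
    return good / len(response_minutes)


def is_met(slo: SloDefinition, response_minutes: Sequence[float]) -> bool:
    """True when attainment reaches the agreed target."""
    return attainment(slo, response_minutes) >= slo.target


if __name__ == "__main__":
    slo = SloDefinition("escalation-response", max_minutes=30.0, target=0.9)
    window = [10, 20, 31, 5]
    print(slo.fingerprint()[:12], attainment(slo, window), is_met(slo, window))
```

Because the function has no hidden inputs, either party can recompute the result from the raw records, which is what lets the partner own and defend the metric rather than receive it.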

What bridging doesn't fix

I want to be honest about what this work could and couldn't address.

The work is mostly about reducing the gap between what the inherited system assumes and what the new operators can actually do. It moves the share of incidents and tickets a sovereign team can handle on their own. It doesn't move the structural constraints.

The single-region topology cannot be bridged to a multi-region failure model. The complexity of the underlying stack is what it is; you can simplify the operator's view but you can't remove the services. Some operational patterns inherit assumptions from a larger team with a deeper bench; you can tune individual alerts but the underlying rate of unusual events doesn't drop. This work makes the system operable. It doesn't make it small.

There's a sharper limit too. The mitigation toolkit available to any SRE role depends on what that role is structurally permitted to do. The operational contract between hyperscaler and sovereign operator has to be honest about the asymmetry: even mitigation is structurally different on the two sides of the seam, and some of what one side considers routine has to be routed upstream by the other.

These constraints are what sovereignty requires: day-to-day independence from the technology provider, with a clearly defined seam where deep changes still come from the people who wrote the code.

Thoughts

I joined this project after the high-level design had been set by other SREs, and most of what I did was practical operational work inside that design rather than architectural decisions about it. So the framing above is a participant's view, not an architect's. It's what the questions looked like from the bridge.

What I'm thinking about right now is how widely this shape is going to apply. Sovereignty as a category is broadening: sovereign clouds, partner-operated regional clouds, the AI cloud partnerships now forming between hyperscalers, neoclouds and AI labs. Every one of those arrangements is, at some level, a system designed for one operational model being run by people from a different one.

The three questions don't go away, they just show up in different vocabulary. That is what makes sovereignty an SRE problem, not just a regulatory one.
